We need Encrypted Data Availability (+ mempools), and the Death of PBS

Why decentralising your proposer set without encryption is the worst possible architecture for minimising MEV (+ how encryption helps w/o MCP), and why PBS has no place in an MCP world.


Quick side note: encrypted/private mempools are not just needed for MCP setups, but also for live blockchain systems today. As Julian Ma eloquently puts it, they can help on many fronts, especially in ensuring that the entirety of DeFi happens on-chain (and not partly off-chain). I would recommend reading his flurry of tweets: here, here and here.

Many moons ago, we wrote a blog post about the mechanisms and setups of MEV in a modular ecosystem. In that piece, we had a section aptly named data availability MEV. There, we mentioned that most MEV (if not all, barring specific cross-domain situations) would likely end up in the execution layer, where leader election happens, since leaders decide inclusion and ordering. However, over the last few weeks I’ve had several discussions in which the idea of DA MEV has come up. Funnily enough, not in the context of modularity, but on monolithic L1s (specifically ones utilising multiple concurrent proposers (MCP)).

With this newfound context, we thought it would be fruitful to dive a little bit deeper into this topic, alongside other interesting mechanical changes coming to several more modern chains, and their effects on MEV.

In my last article, we focused on the development of multiple concurrent proposers (MCP) and their possible effects not only on censorship resistance, but also on throughput and customisation. An interesting (and somewhat underexplored) aspect of MCP is its potential effect on MEV, specifically on the data-availability side.

If you need a quick primer on data availability: DA allows us to check with a very high probability that all the data for a block has been published. Data availability is required to be able to detect fraud and also to recreate the entirety of the chain.

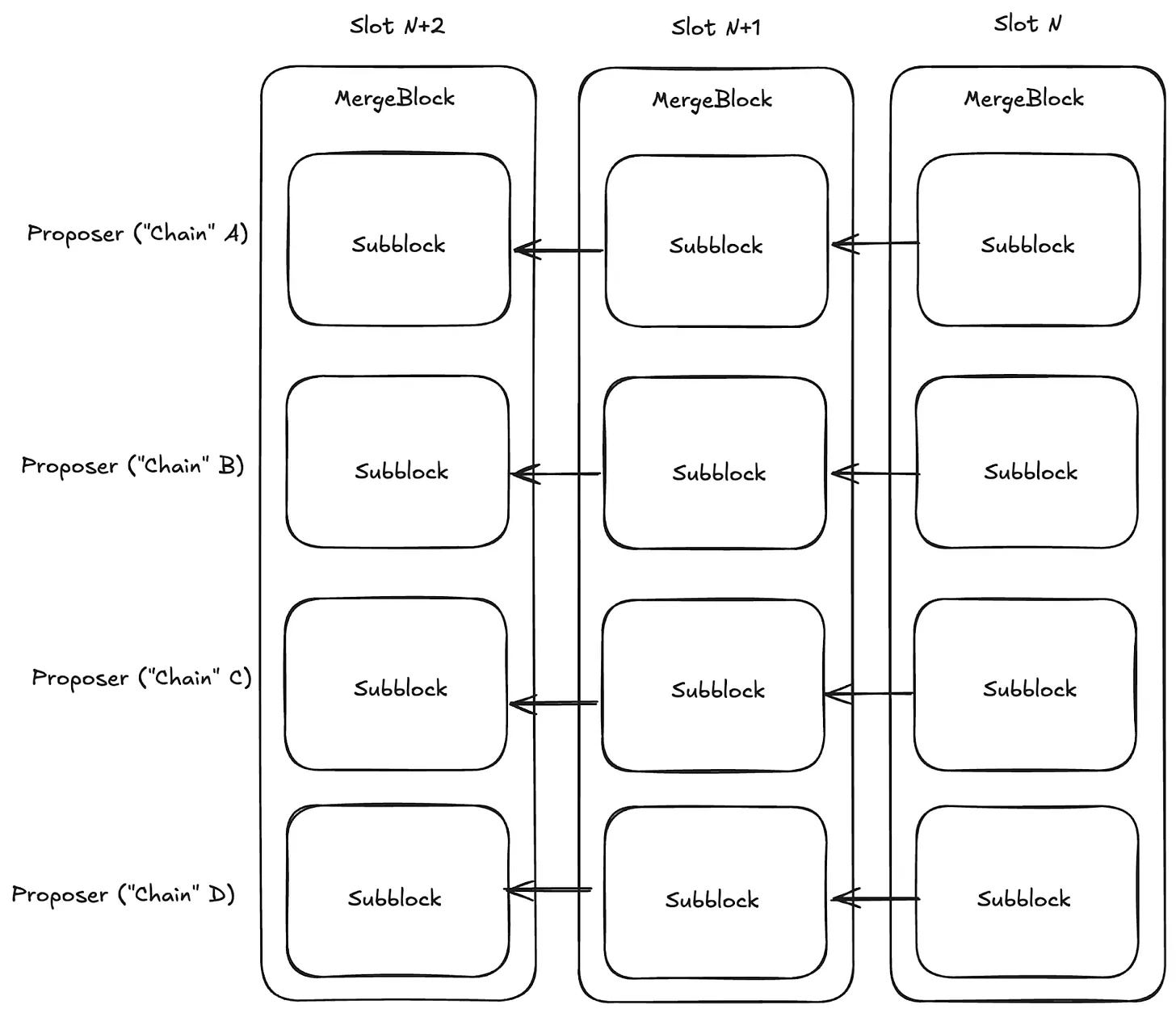

And a primer on MCP: instead of a single proposer deciding which block gets proposed, multiple concurrent proposers contribute mini-blocks that are merged into a single, larger one.
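To make the merge step concrete, here is a minimal Python sketch. The merge rule (union of all mini-blocks, de-duplicated by transaction hash, ordered by priority fee with the hash as a tie-breaker) is a hypothetical choice for illustration, not the rule of any specific MCP protocol; the `Tx` type and `merge_mini_blocks` function are likewise made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    tx_hash: str       # unique transaction identifier
    priority_fee: int  # fee offered by the sender (arbitrary units)


def merge_mini_blocks(mini_blocks: list[list[Tx]]) -> tuple[list[Tx], int]:
    """Merge concurrent mini-blocks into one block (hypothetical rule).

    Keeps the first copy of each tx_hash, orders by priority fee (descending)
    with tx_hash as a tie-breaker, and counts the duplicate inclusions dropped.
    """
    seen: dict[str, Tx] = {}
    duplicates = 0
    for block in mini_blocks:
        for tx in block:
            if tx.tx_hash in seen:
                duplicates += 1
            else:
                seen[tx.tx_hash] = tx
    merged = sorted(seen.values(), key=lambda t: (-t.priority_fee, t.tx_hash))
    return merged, duplicates


proposer_a = [Tx("0xaa", 5), Tx("0xbb", 1)]
proposer_b = [Tx("0xaa", 5), Tx("0xcc", 3)]  # B also included 0xaa
block, dupes = merge_mini_blocks([proposer_a, proposer_b])
print([t.tx_hash for t in block], dupes)  # ['0xaa', '0xcc', '0xbb'] 1
```

The same transaction can land in several mini-blocks, so some rule has to decide which proposer gets credit for it; that is where the duplicate-inclusion games discussed later come from.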

The DA MEV Problem

In setups with delayed execution, where transaction inputs are published before execution (MCP), there’s a high probability that MEV-prone validators could participate in transaction stealing by taking transactions from the DA layer and engaging in front-running.

For example, a validator (or a colluding set of them) could play timing games: waiting for others' bids and then submitting higher ones. This is especially important when the DA layer does not support Replace-by-Fee (RBF), since the original sender cannot bump their fee in response. You could see one validator’s set of transactions published first, take the ones you deem valuable and outbid them for execution.

DA generally doesn’t have a significant impact on MEV, due to the absence of leader election (in most L2s) and the fact that a single leader is chosen in advance on most L1s. MCP, however, changes this equation entirely: it introduces several concurrent leaders, and with several concurrent leaders all observing a public mempool, you get a multiplicative effect on the MEV attack surface.

A possible solution to these types of attacks is to encrypt the data being made available in relation to the transactions about to be executed and put into a confirmed block. The reason for doing this is to reduce the potential for MEV games arising from the introduction of more novel mechanism designs. However, most methods for achieving encrypted DA are likely to lead to an increase in latency, as both ZK and FHE have considerable compute overhead (although we’re closer to closing the gap with ZK). There are, however, other ways to get to the same goal.

Encrypted DA

The core idea is straightforward: utilise cryptography (or hardware) to hide transaction content until the finalised ordering of transactions in a block is irrevocably committed. This removes the ability to steal transactions and engage in timing games. One thing to keep in mind, though, is that while providing encryption, we also want to limit the latency we introduce into the system.

There are several methods you could utilise to achieve this, each with different tradeoffs and properties. Let’s explore the main ones:

1. Threshold Encryption (e.g., Shutter Network)

Transactions are encrypted to a threshold committee; the decryption key is released only after block ordering is committed. Shutter is already live on Gnosis Chain, providing the closest real-world validation of this approach.

The tradeoff: liveness and safety depend on the threshold committee. If fewer than t of the n members are online or willing to release their shares, decryption stalls; and any t colluding members could decrypt early.
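The t-of-n property can be illustrated with Shamir secret sharing of a decryption key. This is a simplified stand-in: production systems such as Shutter rely on distributed key generation rather than a trusted dealer who knows the whole key, as this sketch assumes. `split_key`, `recover_key` and the field size are illustrative choices.

```python
import secrets

PRIME = 2**127 - 1  # Mersenne prime; all arithmetic happens in this prime field


def split_key(key: int, t: int, n: int) -> list[tuple[int, int]]:
    """Shamir-split `key` into n shares so that any t of them recover it."""
    assert 0 <= key < PRIME and 1 <= t <= n
    coeffs = [key] + [secrets.randbelow(PRIME) for _ in range(t - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule: evaluate the polynomial at x
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_key(shares: list[tuple[int, int]]) -> int:
    """Lagrange-interpolate the sharing polynomial at x = 0."""
    key = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = num * -xj % PRIME
                den = den * (xi - xj) % PRIME
        key = (key + yi * num * pow(den, -1, PRIME)) % PRIME
    return key


key = secrets.randbelow(PRIME)
shares = split_key(key, t=3, n=5)       # a hypothetical 3-of-5 committee
print(recover_key(shares[:3]) == key)   # True
print(recover_key(shares[2:]) == key)   # True
```

Any three of the five shares recover the key; with two or fewer, the committee cannot decrypt, which is the liveness risk described above.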

2. Verifiable Encryption + Private DA (VE-PDA)

Users encrypt transactions but attach a ZK proof that the ciphertext is valid (correct format, sufficient gas, no double-spend). Validators can verify correctness without seeing content. Celestia’s Private Blockspace proposal extends this to the DA layer, where blobs are encrypted at submission and are decryptable only post-ordering.

The tradeoff: ZK proof generation adds user-side latency (seconds on consumer hardware today); proving overhead is the main bottleneck.

3. Trusted Execution Environments (TEEs)

Hardware-based: transactions flow through secure enclaves (SGX, TDX, etc.) that commit to an ordering before revealing content. Explored by Flashbots in their anonymised mempool post.

The tradeoff: the trust assumption shifts entirely to the hardware manufacturer (Intel) and its supply chain, and the enclaves remain exposed to physical attacks (maybe we put them in space? But latency... see Spacecomputer!). SGX has a well-documented history of side-channel attacks (Spectre, Plundervolt).

4. Delayed Reveal / Commit-Reveal Schemes

The simplest approach: users commit a hash of their transaction, ordering is determined, then plaintext is revealed.

The tradeoff: two-round latency doubles block time, and users can grief by never revealing. Celestia’s account-centric private blockspace proposes a variant where reveal is enforced via stake slashing.
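A minimal commit-reveal round in Python, assuming SHA-256 commitments with a random 32-byte salt (so observers cannot brute-force likely transactions against the published hash). The function names are hypothetical, and slashing for non-reveals is only noted in a comment.

```python
import hashlib
import secrets


def commit(tx_bytes: bytes) -> tuple[bytes, bytes]:
    """Round 1: publish sha256(salt || tx) and keep the 32-byte salt private."""
    salt = secrets.token_bytes(32)
    return hashlib.sha256(salt + tx_bytes).digest(), salt


def verify_reveal(commitment: bytes, salt: bytes, tx_bytes: bytes) -> bool:
    """Round 2: check the revealed plaintext against the earlier commitment."""
    return hashlib.sha256(salt + tx_bytes).digest() == commitment


def settle(ordered_commitments: list[bytes],
           reveals: dict[bytes, tuple[bytes, bytes]]) -> tuple[list[bytes], list[bytes]]:
    """Execute valid reveals in the already-committed order.

    Commitments without a valid reveal are skipped (the griefing case);
    a real protocol might slash the committer's stake instead.
    """
    executed, unrevealed = [], []
    for c in ordered_commitments:
        salt_and_tx = reveals.get(c)
        if salt_and_tx is not None and verify_reveal(c, *salt_and_tx):
            executed.append(salt_and_tx[1])
        else:
            unrevealed.append(c)
    return executed, unrevealed


c1, s1 = commit(b"swap USDC->ETH")
c2, s2 = commit(b"transfer 10 USDC")
# Ordering [c2, c1] was fixed in round 1; c2's owner never reveals.
executed, missing = settle([c2, c1], {c1: (s1, b"swap USDC->ETH")})
```

The ordering is fixed before any plaintext exists on-chain, which is the property that removes front-running; the cost is the extra round and the non-reveal problem.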

5. Time-Lock Puzzles / Timed-Release Encryption

A transaction is encrypted such that decrypting it requires roughly X seconds of sequential computation; no key ceremony is needed.

The tradeoff: computationally expensive, and adversaries with faster hardware can decrypt early. Not yet practical at blockchain timescales.
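The classic construction is the Rivest-Shamir-Wagner time-lock puzzle: the creator, who knows the factorisation of N, can compute 2^(2^t) mod N cheaply, while everyone else must perform t sequential squarings. The sketch masks a small integer (standing in for a symmetric key) by adding that value mod N. The primes are toy values chosen for speed and offer no security; t = 50,000 is arbitrary.

```python
# Toy RSA-style modulus: both primes are far too small to be secure.
P, Q = 999_983, 1_000_003
N = P * Q


def create_puzzle(secret: int, t: int) -> int:
    """Creator's shortcut: knowing phi(N), reduce the exponent 2^t mod phi(N)."""
    assert 0 <= secret < N
    phi = (P - 1) * (Q - 1)
    mask = pow(2, pow(2, t, phi), N)  # equals 2^(2^t) mod N because gcd(2, N) = 1
    return (secret + mask) % N


def solve_puzzle(ciphertext: int, t: int) -> int:
    """Everyone else: t sequential squarings, which cannot be parallelised."""
    mask = 2
    for _ in range(t):
        mask = mask * mask % N
    return (ciphertext - mask) % N


T = 50_000
ct = create_puzzle(123_456_789, T)
print(solve_puzzle(ct, T))  # 123456789
```

Because the work is sequential squaring, an adversary with faster squaring hardware simply finishes sooner, which is the weakness noted above.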

Public vs. Encrypted DA

Let’s make the contrast concrete.

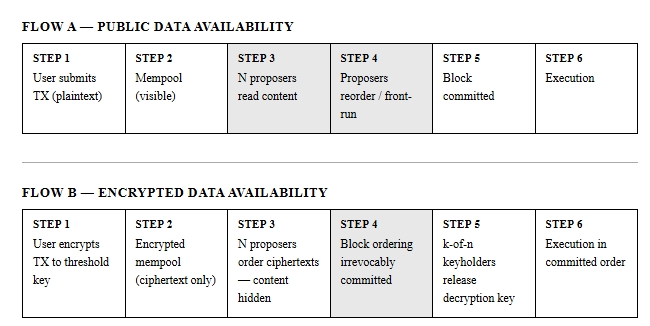

Under public DA: a proposer sees swap(USDC→ETH, amountIn=50000) in the mempool. They can immediately construct a front-running buy and a back-running sell around it. The content of the transaction is what enables the attack. On Ethereum, a very large portion of today’s order flow is private; what actually happens behind the scenes is less than clear, but it does mitigate some of these issues (though not in a verifiable, decentralised or trustless manner).

Under encrypted DA: the proposer sees 0x9f3a...c2b1, a ciphertext. They have no idea if it’s a swap, transfer or a contract deployment. They cannot construct a sandwich because they don’t know the token pair, direction, size, or anything else meaningful. The ordering commitment happens before decryption. Once the block order is irrevocably finalised on-chain, the decryption key is released and transactions execute in that committed order. At that point there is nothing to front-run; the ordering is already done. However, efficiently constructing a block becomes significantly more difficult (if not impossible), since the proposer has no clue how much gas a specific transaction will consume (and if they did know, they would also have an idea of what type of transaction was committed).
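To see why content visibility matters, here is a worked sketch of the sandwich from the public-DA example, assuming a constant-product pool (x * y = k) with no swap fee and hypothetical reserves of 10,000,000 USDC and 5,000 ETH. The attacker's 100,000 USDC front-run is an arbitrary size, not an optimised one.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    """Constant-product AMM (x * y = k) with no swap fee (a simplification)."""
    usdc: float
    eth: float

    def buy_eth(self, usdc_in: float) -> float:
        k = self.usdc * self.eth
        self.usdc += usdc_in
        eth_out = self.eth - k / self.usdc
        self.eth -= eth_out
        return eth_out

    def sell_eth(self, eth_in: float) -> float:
        k = self.usdc * self.eth
        self.eth += eth_in
        usdc_out = self.usdc - k / self.eth
        self.usdc -= usdc_out
        return usdc_out


def sandwich(usdc_reserve: float, eth_reserve: float,
             victim_in: float, attacker_in: float) -> tuple[float, float, float]:
    """Return (victim ETH without attack, victim ETH with attack, attacker USDC profit)."""
    baseline = Pool(usdc_reserve, eth_reserve).buy_eth(victim_in)
    pool = Pool(usdc_reserve, eth_reserve)
    attacker_eth = pool.buy_eth(attacker_in)  # front-run: buy first, pushing the price up
    victim_eth = pool.buy_eth(victim_in)      # victim fills at the worse price
    usdc_back = pool.sell_eth(attacker_eth)   # back-run: sell into the inflated price
    return baseline, victim_eth, usdc_back - attacker_in


print(sandwich(10_000_000, 5_000, 50_000, 100_000))
```

With these numbers the victim receives roughly 24.39 ETH instead of roughly 24.88 ETH, and the attacker nets a bit under 1,000 USDC. Under encrypted DA none of this can be computed in advance, because `victim_in` and the token pair are hidden.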

Quantifying the MEV under MCP

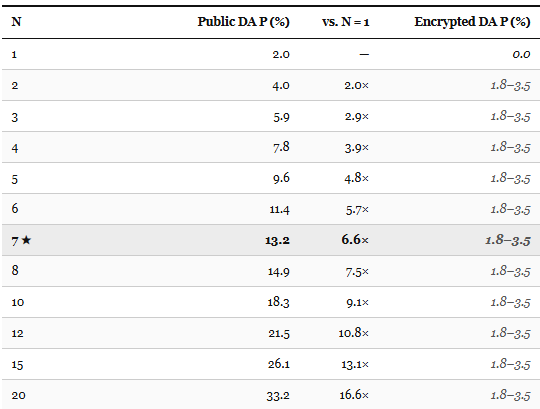

Let’s look at some data to understand just how needed encrypted DA is (especially in a multi-proposer situation).

*7 proposers is the proposed number of Sei Giga proposers in the only live MCP system

The probability of a sandwich attack per transaction on Ethereum is around 2%. The true figure likely sits between 2% and 12% per block, depending on whether the base is all DEX swaps or only swaps above the minimum profitable size threshold. The public-DA values are lower bounds: the real figures could be much higher because structural MCP-specific mechanisms, such as coordination breakdown, timing games and duplicate inclusion, compound (we’ll get into these types of attacks further on).
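One simple way to reason about how the per-transaction figure grows with the number of proposers is the complement rule, assuming (as the caveats below note) that proposers act independently and each sandwiches a given victim with the same probability p. This is an illustrative model, not necessarily the one behind the chart above; the function names are made up.

```python
def p_at_least_one_sandwich(p: float, n_proposers: int) -> float:
    """P(a victim tx is sandwiched by at least one of N proposers),
    assuming each proposer independently attacks with probability p."""
    return 1 - (1 - p) ** n_proposers


def expected_sandwich_attempts(p: float, n_proposers: int) -> float:
    """Expected number of concurrent sandwich attempts on the same victim tx."""
    return p * n_proposers


for n in (1, 7):
    print(f"N={n}: P(>=1 sandwich)={p_at_least_one_sandwich(0.02, n):.4f}, "
          f"E[attempts]={expected_sandwich_attempts(0.02, n):.2f}")
```

With p = 2% and N = 7, the chance of at least one sandwich rises to roughly 13.2%, and the expected number of attempts on that victim is 0.14 (slightly higher, because some slots see more than one attempt). Coordination between proposers would pull these numbers down; the MCP-specific effects discussed below would push them up.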

Under Proposer-Builder Separation, it is block builders, not proposers, that execute sandwiches; we’ll return to MCP vs PBS later in the article. Flashbots’ BuilderNet partially addresses builder centralisation but redistributes rather than eliminates MEV, leaving the extraction mechanism intact.

Some important caveats on this model. It assumes proposers act independently, whereas in reality they likely coordinate or bid through the same MEV infrastructure (like MEV-Boost relays), which changes the math. The strongest real-world evidence that encrypted DA can solve sandwich attacks is that rollups with private mempools see essentially zero of them.

The 1.8-3.5% floor for encrypted DA that we model is illustrative. It would depend on relay-level leakage (if MEV-Boost or equivalent infrastructure sees plaintext before encryption, a possibility in TEE-based schemes), timing attacks (the existence and size of a ciphertext can leak information even if content is hidden), and statistical inference (high-frequency traders can sometimes infer transaction content from size + timing patterns on known protocols).
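The size side channel is easy to demonstrate. In the sketch below, a toy length-preserving cipher (XOR with a fresh random keystream, i.e. a one-time pad) hides the bytes completely, yet an observer who knows typical payload sizes can still guess the transaction type. The 68-byte figure is the calldata size of an ERC-20 `transfer(address,uint256)` call (4-byte selector plus two 32-byte arguments); the 260-byte swap size and the lookup table are hypothetical.

```python
import os

# Hypothetical lookup table an observer might build from public history.
# 68 bytes = ERC-20 transfer(address,uint256) calldata; 260 bytes is a made-up swap size.
KNOWN_SIZES = {68: "erc20_transfer", 260: "dex_swap"}


def toy_encrypt(plaintext: bytes) -> bytes:
    """One-time-pad style XOR with a fresh random keystream.

    The content is perfectly hidden, but len(ciphertext) == len(plaintext)."""
    keystream = os.urandom(len(plaintext))
    return bytes(p ^ k for p, k in zip(plaintext, keystream))


def guess_type(ciphertext: bytes) -> str:
    """Infer the transaction type from ciphertext length alone, without decrypting."""
    return KNOWN_SIZES.get(len(ciphertext), "unknown")


swap_ct = toy_encrypt(bytes(260))  # 260 zero bytes stand in for a swap payload
print(guess_type(swap_ct))         # dex_swap
```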

As for why encryption eliminates the content-visibility attack vector, the Flashbots anonymised mempools paper argues this directly: without content visibility, front-running requires guessing rather than reading. Shutter Network’s deployed implementation on Gnosis Chain shows near-zero sandwich attacks on encrypted transactions in practice.

Why does MCP increase the chance of attacks (in this case, sandwich attacks)?

On Ethereum today, many searchers compete to find and submit sandwich bundles, but they all route through the single builder who wins the block-building auction and the single proposer who proposes the block. The builder acts as a coordinator and filter: only one sandwich bundle is executed per victim transaction, because only one block is finalised. The searchers compete with each other to get their bundle picked, bidding away profits to the builder via gas. MCP doesn’t add new searchers; it adds new block assemblers that the same pool of searchers can route to.

The real reasons MCP with public DA increases MEV risk are structural and specific to concurrent block production. Three matter most. First, timing games: with N proposers all watching the same mempool and each other’s DA proofs, the game-theoretic equilibrium shifts toward delay, with proposers holding back their blocks to observe competitors, which widens the extraction window for every pending transaction. Second, same-tick duplicate inclusion: multiple proposers can independently include the same victim transaction in concurrent block proposals, creating races in which several sandwich attempts land in the same slot and the victim’s transaction is manipulated by more than one actor before consensus resolves which block wins. Third, coordination breakdown: today’s builder is a centralised coordinator that picks the single best bundle; under MCP that coordination disappears, and the absence of a chokepoint means chaotic multi-party extraction rather than the orderly, profit-maximising single extraction you get today.

Concurrent proposers need encrypted DA

A protocol that decentralises its proposer set without introducing encrypted DA will, at least temporarily, be subject to arguably the worst possible MEV environment. That is the predicted outcome of applying the sandwich model to any N > 1 public-DA MCP system.

The only way to reach “low” amounts of MEV with highly decentralised block building is encrypted MCP (if you only encrypt block building, the public DA can still be attacked). Encryption collapses the content attack window to near zero. The residual reflects side-channel leakage from transaction size and timing signals (more on this below) and MCP-specific timing-game effects that persist even after content is hidden. Even so, you still achieve lower sandwich MEV than Ethereum while providing materially stronger censorship-resistance guarantees via MCP.

As such, encrypted DA feels less like an option and more like an architectural necessity to prevent decentralisation from amplifying MEV.

MCP-specific MEV Attacks:

Landers & Marsh formalise some rather interesting MEV attacks that MCP introduces:

Same-tick duplicate steals: Proposer B copies a high-value transaction from Proposer A’s block and includes it in their concurrent block for the same tick. A loses the fee.

Proposer-to-proposer auction race: N proposers race to include the highest-value pending transaction, creating an implicit priority-fee auction without a formal builder market.

Proof-of-availability timing game: all participating proposers delay block release to the last moment T to observe competitors’ DA proofs. This creates a systemic latency floor. Encrypted DA eliminates content leakage, but not the timing signal itself.

Threshold key release delay: if keyholders are bribed by a block proposer, a colluding subset could delay releasing the decryption key after the ordering commitment, enabling last-second content observation.
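The same-tick duplicate steal depends on how a merged block credits fees for a transaction that appears in several mini-blocks. The sketch assumes a hypothetical rule (credit goes to the first proposer in a per-slot ordering) and shows proposer B copying A’s valuable transaction to capture its fee. Real MCP designs may split, burn or otherwise assign duplicate fees; `attribute_fees` is made up for illustration.

```python
def attribute_fees(mini_blocks: dict[str, list[tuple[str, int]]],
                   proposer_order: list[str]) -> dict[str, int]:
    """Credit each tx's fee to the first proposer in `proposer_order` that included it.

    Hypothetical attribution rule for illustration only."""
    credited: set[str] = set()
    earnings = {p: 0 for p in proposer_order}
    for proposer in proposer_order:
        for tx_hash, fee in mini_blocks.get(proposer, []):
            if tx_hash not in credited:  # later duplicates earn nothing
                credited.add(tx_hash)
                earnings[proposer] += fee
    return earnings


order = ["B", "A"]  # hypothetical per-slot ordering in which B comes first
honest = {"A": [("0xvaluable", 100), ("0xsmall", 1)], "B": [("0xother", 2)]}
steal = {"A": honest["A"], "B": [("0xvaluable", 100), ("0xother", 2)]}
print(attribute_fees(honest, order))  # {'B': 2, 'A': 101}
print(attribute_fees(steal, order))   # {'B': 102, 'A': 1}
```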

If you’re interested in how to mitigate these, I’d recommend reading the article linked above.

One very interesting takeaway from the article is that, in most cases, encrypted DA does not fully eliminate timing games by itself, so there is likely a need for both encryption and priority DAGs.

The Death of PBS in an MCP world

MEV issues eventually led to the introduction of proposer-builder separation on Ethereum, as priority gas auction (PGA) wars heated up. The fair-exchange problem between the builder and the proposer (solved by the “trusted” relay) remains a persistent issue. However, in a world with MCP, PBS is unnecessary and probably harmful.

This is primarily because the properties gained from MCP are similar to those from PBS (currently implemented outside the base protocol), but are based on in-protocol rules rather than external ones.

PBS was necessary due to the market dynamics of Ethereum’s block-building and validation phase. However, in a world where no single validator controls which block gets proposed (and where the fair-exchange problem is much smaller), there is no need to split block building from validation, since optimisation and performance matter less (a transaction can get included by any proposer).

PBS essentially removes the probabilistic censorship-resistance benefits gained from MCP, which would be a step in the wrong direction (unless you then start working on the fair-exchange problem, which, again, MCP already solves). What PBS does do well is force highly optimised block building, gaining performance at the cost of centralisation. Single-builder censorship also reduces inclusion probability. Furthermore, running a PBS auction across several validators rather than one is a whole different beast; the economics are not the same.

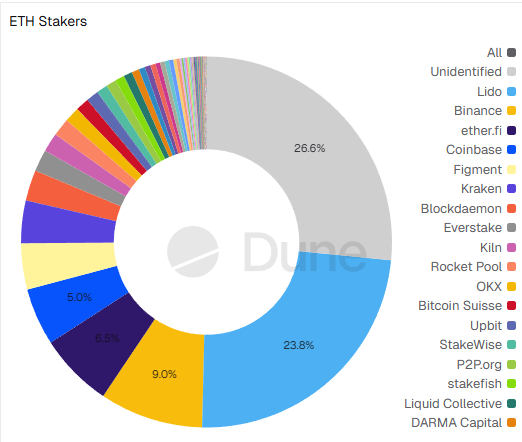

However, given that most nodes are run by a handful of service providers, do we really achieve true separation of block building?

Source: Hildobby, Dune.

Perhaps a needed change is to allow each provider only a single subblock per MCP block, since the security threshold isn’t that hard to reach for a set of colluding staking providers.
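A rough way to compare the two situations, using hypothetical stake shares rather than the Dune data above: without a cap, what matters is how few of the largest providers together exceed a safety threshold (a Nakamoto-coefficient-style count); with a one-subblock-per-provider cap, controlling more than that fraction of the N subblocks requires that many distinct providers. The 1/3 threshold, the shares and N = 7 (the Sei Giga figure mentioned earlier) are assumptions, and the cap presumes providers can be reliably identified.

```python
import math


def min_colluding_providers(shares: list[float], threshold: float) -> int:
    """Fewest providers (largest first) whose combined share exceeds `threshold`."""
    total = 0.0
    for count, share in enumerate(sorted(shares, reverse=True), start=1):
        total += share
        if total > threshold:
            return count
    raise ValueError("threshold cannot be exceeded with these shares")


def min_colluding_with_cap(n_subblocks: int, threshold: float) -> int:
    """With at most one subblock per provider, controlling more than
    `threshold` of the n subblocks needs this many distinct providers."""
    return math.floor(threshold * n_subblocks) + 1


# Hypothetical stake shares for the five largest providers (not real data).
shares = [0.25, 0.15, 0.10, 0.10, 0.05]
print(min_colluding_providers(shares, 1 / 3))  # 2
print(min_colluding_with_cap(7, 1 / 3))        # 3
```

Under these made-up numbers the cap raises the bar from two to three colluding providers, and the capped requirement keeps growing with N while the uncapped one does not.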

Essentially, by having MCP, you decentralise the sequencer/builder (since we go back to validators ordering blocks) and remove the previous centralising bottleneck (a single leader).

We’d also love to see MEV in delayed-execution environments (especially Monad) explored in more depth.